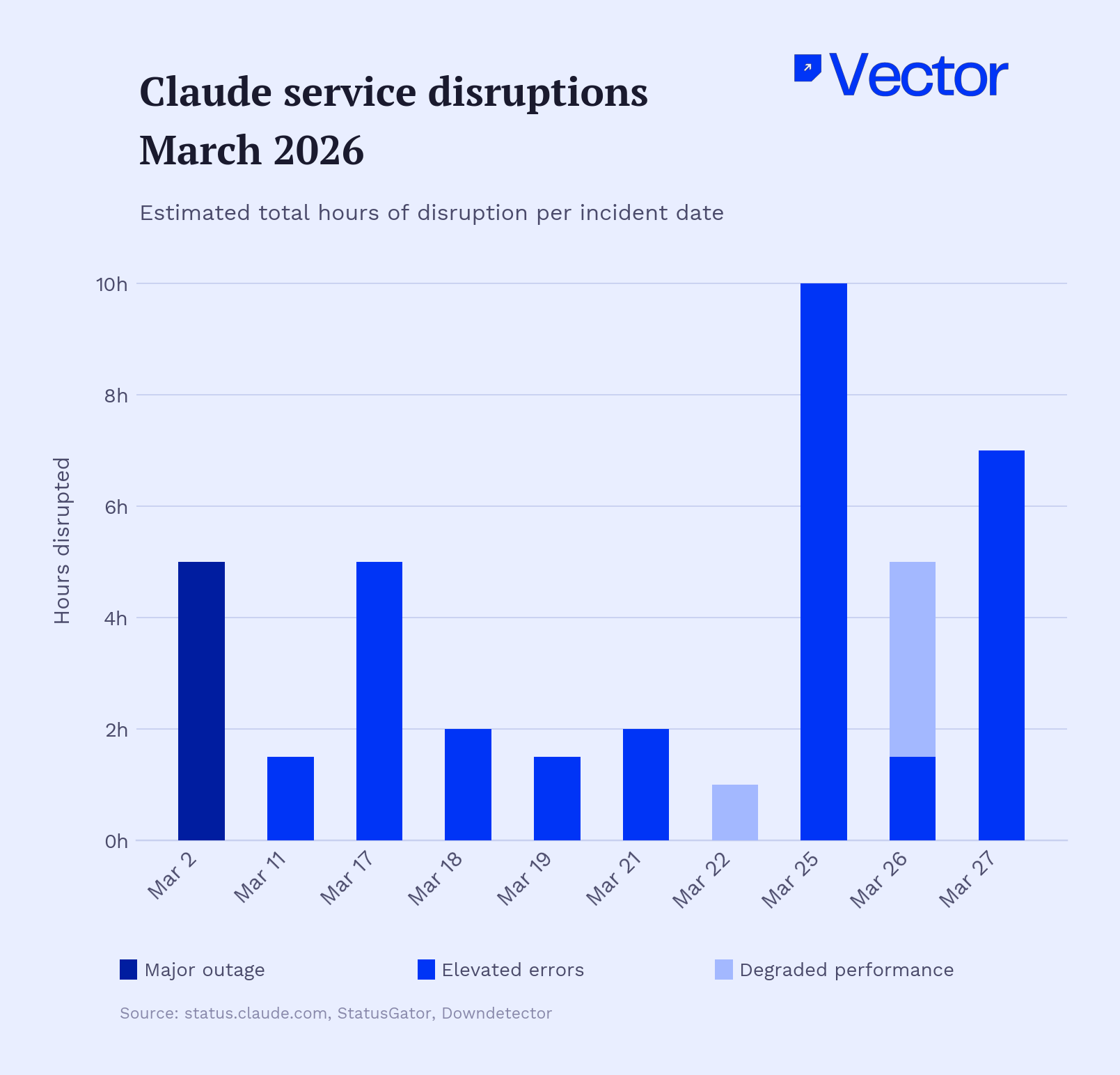

THE STATUS PAGE for Anthropic's Claude has been unusually busy this month. On March 2, the chatbot suffered a global outage just days after climbing to the top of the App Store — a textbook case of what engineers grimly call "success tax." What began as elevated error rates escalated into a prolonged outage affecting web access, authentication, and model endpoints for several hours. Users were left unable to access the chatbot through its web interface, mobile apps, and coding tools, though the core API remained functional for developers with direct integrations. Then it happened again. And again. Across March, Claude logged at least ten distinct service disruptions, ranging from brief authentication hiccups to a brutal seven-hour stretch of elevated errors on Opus 4.6 on March 25 that sent more than 4,000 Downdetector reports flooding in.

Anthropic is not uniquely afflicted. OpenAI's ChatGPT suffered back-to-back outages on February 3 and 4, with over 28,000 Downdetector reports on the first day and more than 24,000 on the second. In the last 90 days alone, ChatGPT has logged 53 incidents — one major outage and 52 minor ones. The pattern is industry-wide: as AI models graduate from novelty to infrastructure, the gap between what users expect (always-on, instant, flawless) and what providers can deliver (complicated distributed systems running the most compute-intensive software ever written) is widening uncomfortably.

Success tax

But the Claude outages land differently in March 2026 than they would have a year ago. Anthropic now serves more than 300,000 business customers, and its number of large accounts — each representing more than $100,000 in annual run-rate revenue — has grown nearly sevenfold in the past year. Over 40% of professional developers now use AI-assisted coding tools in their production environments. When Claude goes dark, it is not hobbyists losing their conversational toy. It is engineering teams losing their pair programmer, content pipelines freezing mid-cycle, and customer support bots falling silent while ticket queues swell. Single-vendor dependency creates a productivity wall: if your AI is hard-coded to one provider, their downtime is your operational crisis.

The trouble is that the frontier AI providers are scaling demand and infrastructure simultaneously — and demand is winning. Anthropic has committed a staggering amount of capital to closing the gap. The company has access to over one gigawatt of capacity coming online in 2026 through Google Cloud alone, backed by a commitment to deploy up to one million TPUv7 Ironwood chips. A separate $50 billion partnership with neocloud provider Fluidstack will build custom data centers in Texas and New York, scheduled to go live throughout 2026. Add in Amazon's Project Rainier — 500,000 Trainium2 chips scaling to one million — and a $30 billion Microsoft Azure commitment, and Anthropic's total compute pipeline approaches multi-gigawatt scale, capacity hitherto available only to the largest hyperscalers. It is, by any measure, an extraordinary infrastructure splurge for a company that was a 30-person research lab three years ago.

Routing around the outage

Yet raw capacity alone does not solve the reliability problem, and enterprises are not waiting for Anthropic (or OpenAI, or Google) to achieve five-nines uptime on their own. A nascent but fast-growing category of infrastructure, the LLM gateway, has emerged to abstract away the single-provider bottleneck. AI infrastructure breaks before models do, so relying on a single LLM provider creates operational risk; gateways aim to reduce it with automatic failover and multi-provider routing. Gateways such as Bifrost and LiteLLM, along with offerings from Cloudflare and Kong, sit between applications and model APIs and automatically redirect requests to healthy providers when the primary goes down. According to IDC's 2026 AI and Automation FutureScape, by 2028, 70% of top AI-driven enterprises will use advanced multi-tool architectures to dynamically manage model routing across diverse providers.
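To make the routing idea concrete, here is a minimal sketch of ordered failover in Python. It is an illustration under stated assumptions, not how any of the gateways named above work internally: `route_with_failover`, `ProviderError`, and the two stand-in provider functions are hypothetical names, and a real gateway would add health checks, timeouts, and retries.

```python
from typing import Callable, Sequence


class ProviderError(Exception):
    """Hypothetical error a provider callable raises when a request fails."""


def route_with_failover(
    prompt: str,
    providers: Sequence[tuple[str, Callable[[str], str]]],
) -> tuple[str, str]:
    """Try each (name, call) pair in order; return (name, reply) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except ProviderError as exc:
            # Record the failure and fall through to the next provider.
            errors.append(f"{name}: {exc}")
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Stand-in providers for illustration only; no real API is called.
def primary_down(prompt: str) -> str:
    raise ProviderError("elevated error rate")


def backup_ok(prompt: str) -> str:
    return f"[backup] {prompt}"


if __name__ == "__main__":
    used, reply = route_with_failover(
        "Summarize this ticket",
        [("primary", primary_down), ("backup", backup_ok)],
    )
    print(used, reply)
```

The order of the list is the policy: the application names a preferred provider first and accepts whichever healthy one answers.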

The logic is straightforward; the execution is not. Switching from Claude to GPT-5.3 mid-workflow means reformatting prompts, recalibrating output quality, and accepting that the backup model may handle certain tasks materially worse. Graceful degradation, which keeps critical functions running at reduced capacity, is proving more practical than perfect redundancy, which would require maintaining identical capabilities across multiple providers. As one enterprise AI architect put it, the goal is not seamless substitution but triage: which workflows absolutely cannot fail, and what is the minimum viable fallback for each?
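One way to picture that triage is as a small policy table that maps each workflow to its minimum viable fallback. The Python sketch below is illustrative only: the workflow names, the `Fallback` tiers, and the `plan` function are all assumptions made for the example, not a description of any company's actual setup.

```python
from enum import Enum


class Fallback(Enum):
    """Hypothetical fallback tiers, from most to least capable."""
    BACKUP_MODEL = "backup_model"   # reroute to a second provider
    CANNED_REPLY = "canned_reply"   # serve a templated response
    QUEUE = "queue"                 # defer the work until the primary recovers


# Assumed triage table: which fallback each workflow gets during an outage.
POLICIES = {
    "customer_support_bot": Fallback.BACKUP_MODEL,  # cannot fail
    "status_notifications": Fallback.CANNED_REPLY,  # reduced quality is acceptable
    "content_pipeline": Fallback.QUEUE,             # can wait a few hours
}


def plan(workflow: str, primary_healthy: bool) -> str:
    """Return where a request should go given the primary provider's health."""
    if primary_healthy:
        return "primary"
    # Unknown workflows are deferred rather than silently rerouted.
    return POLICIES.get(workflow, Fallback.QUEUE).value


if __name__ == "__main__":
    for name in POLICIES:
        print(name, "->", plan(name, primary_healthy=False))
```

Deferring unknown workflows by default is a deliberate choice in this sketch: it keeps an unvetted workflow from being sent to a backup model whose output nobody has checked.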

Deloitte's 2026 State of AI survey found that technical infrastructure readiness among enterprises sits at just 43%, with governance trailing at 30%. PwC's 2026 AI Agent Survey found that only 34% of enterprises say their AI programs produce measurable financial impact, and fewer than 20% have mature governance frameworks in place. The companies treating AI as a utility — building redundancy, monitoring provider health, maintaining prompt libraries for multiple models — are still a minority. Most organizations are running production workloads on a single provider and hoping the status page stays green.

Anthropic's gigawatt-scale infrastructure investments will, eventually, ease the supply-side pressure. But the deeper lesson of Claude's rocky March is structural: the AI industry has built extraordinarily powerful models and plugged them into mission-critical enterprise workflows without developing the reliability culture — the runbooks, the failover protocols, the multi-vendor architectures — that every other category of enterprise infrastructure takes for granted. The companies that figure out that plumbing first will find themselves with a formidable competitive advantage. Everyone else will keep refreshing the status page. ■
