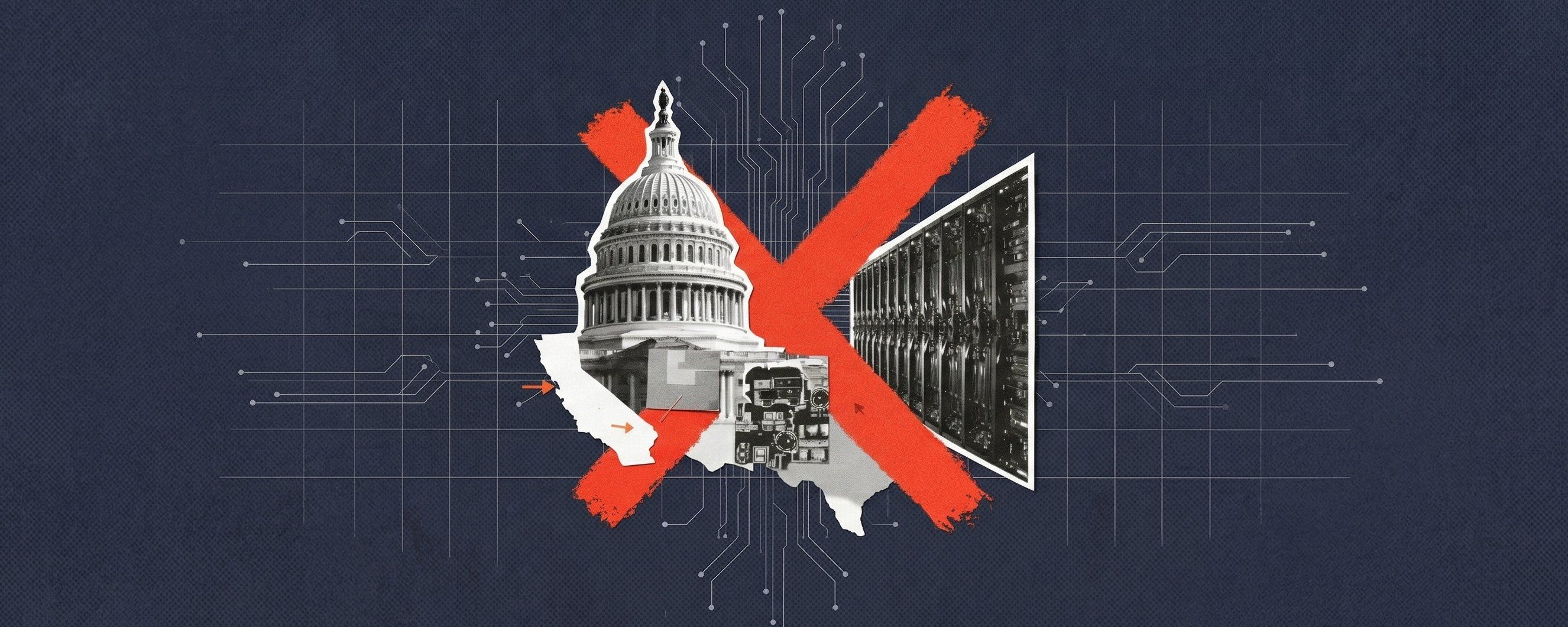

THE TRUMP ADMINISTRATION'S long-awaited blueprint for governing artificial intelligence, released on Friday, spans seven pillars, 2,000-odd words, and an unmistakable thesis: Washington should set the rules, and the rules should be few. The framework urges Congress to adopt a federally unified, innovation-oriented regime centered on preemption of state AI laws and a "light-touch" regulatory approach. It is the most detailed articulation yet of the administration's view that the greater risk to American competitiveness is not too little regulation, but too much of it, in too many places.

The framework stems from an executive order Trump signed in December that sought to stop states from enforcing their own regulations around artificial intelligence. That order gave the Commerce Department ninety days to identify burdensome state laws and directed the Attorney General to establish a litigation task force to challenge state AI statutes as unconstitutional regulation of interstate commerce. The legislative blueprint now arriving on Capitol Hill is, in effect, the policy complement to that legal offensive: a set of recommendations designed to give Congress something affirmative to pass while the DOJ works to tear down what states have already built.

The timing is no coincidence. In 2025, more than 1,200 AI-related bills were introduced across all fifty states, and 145 were enacted into law. Colorado, California, Texas, and Utah have all passed comprehensive AI statutes, covering everything from algorithmic discrimination and transparency requirements to frontier model safety disclosures. For an industry building general-purpose technology across state lines, the compliance surface was expanding fast.

Fifty shades of preemption

The framework's most consequential section, however, is defined less by what it proposes than by what it prohibits. The document calls on Congress to preempt state laws that regulate AI development outright, to prevent states from penalizing AI developers for third-party misuse of their models, and to bar states from burdening lawful AI-enabled activity. The carve-outs are narrow: states may still enforce generally applicable child-protection statutes, zoning laws for data center placement, and rules governing their own procurement. Everything else (the bias-mitigation mandates, the impact assessments, the transparency disclosures that make up the core of Colorado's AI Act and California's SB 53) falls squarely in the crosshairs of federal preemption.

The framework's other pillars tread safer political ground. Its child safety provisions (parental controls, age-assurance requirements, limits on data collection from minors) appear designed to appeal to AI-wary members of both parties in a midterm year when chatbot companionship apps aimed at teenagers have become a bipartisan source of parental alarm. The document also pledges to shield residential ratepayers from electricity-cost increases driven by new data center construction (a shrewd concession, given that AI infrastructure was already becoming a flashpoint in energy-constrained communities), while proposing to streamline federal permitting so developers can generate power on-site.

On intellectual property, the administration performed what can only be described as a carefully choreographed punt. The framework states the administration's belief that training AI models on copyrighted material does not violate copyright law, but it acknowledges contrary arguments and defers the question to the courts, where dozens of lawsuits from writers, publishers, visual artists, and music labels remain pending. Congress is urged not to legislate on the fair-use question itself but rather to consider collective-licensing frameworks, which would let rights holders negotiate compensation without antitrust liability; the framework does not specify when, or whether, such licensing would be required. It is the kind of construct that gives everyone a talking point without giving anyone an answer.

Then there is the anti-censorship provision — arguably the most politically charged section and one that lands differently three weeks after the administration blacklisted Anthropic. The framework recommends that Congress prevent federal agencies from coercing AI providers to alter content based on ideological agendas, and provide a means for Americans to seek redress for government censorship on AI platforms. Read in isolation, it is a reasonable First Amendment argument. Read against the backdrop of Trump ordering every federal agency to cease using Anthropic's technology after the company refused to let the Pentagon use its models without restrictions on mass surveillance and autonomous weapons, and the provision starts to look less like a principle and more like a precedent — one that draws the line at government influence over AI outputs while asserting expansive government authority over AI inputs.

Notably, the framework recommends against creating any new federal rulemaking body for AI, preferring a sector-specific approach through existing regulators. That is a significant structural choice. The EU has its AI Office. The UK has its AI Security Institute (renamed from the AI Safety Institute in 2025). The United States, under this blueprint, would have no centralized AI regulator at all, just a constellation of agencies applying old mandates to new technology, coordinated (loosely) by the White House Office of Science and Technology Policy.

House Republican leaders swiftly endorsed the framework, pledging bipartisan cooperation. Democrats have already criticized it, however: Rep. Josh Gottheimer of New Jersey called it insufficient on accountability, and California Governor Gavin Newsom's office accused Trump of trying to gut state consumer protections. Many in the AI policy space doubt any legislation can pass before the November midterms, and Trump has already urged Republican lawmakers to prioritize his voter-ID bill above all else before then. Senator Ted Cruz's earlier attempt to impose a ten-year moratorium on state AI law enforcement was defeated by a nearly unanimous Senate vote, suggesting that even within the president's own party, the appetite for sweeping preemption has limits.

The question now is whether this framework becomes legislation or merely a positioning document — a declaration of intent that shapes the Overton window for AI governance without ever passing a floor vote. For the technology companies that have lobbied hard against state regulation, the blueprint offers exactly what they wanted to hear. For the states that have spent two years constructing their own safety architecture, it reads like a demolition order. And for the millions of Americans whose jobs, credit decisions, and healthcare recommendations are increasingly shaped by algorithmic systems, the framework offers a single, clarifying promise: the guardrails, if they come at all, will be light. ■
