Even AI losers are winners

When Musk dissolved xAI on Wednesday, the cluster it left behind was already leased to one of its rivals

On the afternoon of Wednesday, May 6th, Dario Amodei, the chief executive of Anthropic, walked onto the stage at his company's developer conference in San Francisco to explain why the AI lab he co-founded had spent the morning leasing compute from a direct competitor in the model race. Earlier that day, Anthropic had announced an agreement to take the entire output of SpaceX's flagship Tennessee data center, a 300-megawatt cluster known internally as Colossus 1 and originally built to power Grok, the chatbot belonging to Elon Musk's xAI. Revenue and usage at Anthropic had grown eightyfold in the first quarter on an annualized basis, Amodei told the audience, against an internal plan that had assumed tenfold. "That," he said, "is the reason we have had difficulties with compute."

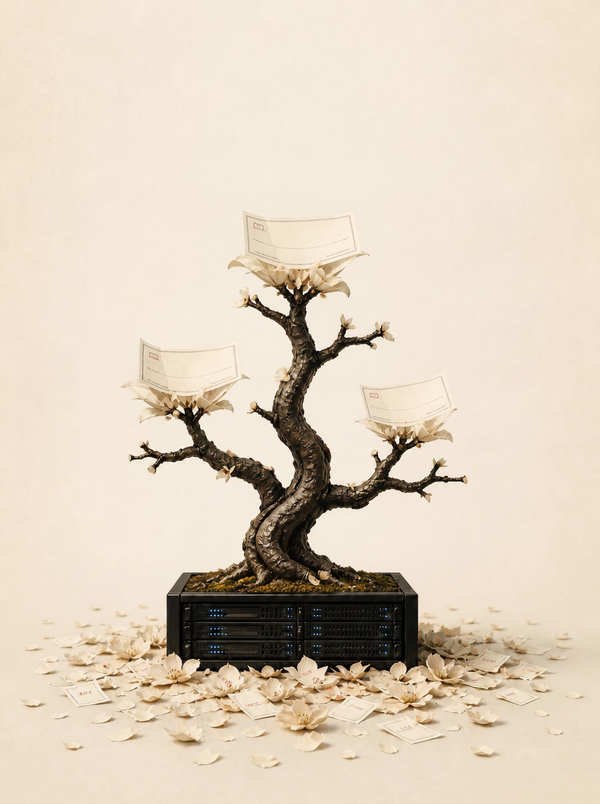

Hours later, Musk dissolved xAI as a separate company, folding its products into SpaceX and rebranding them SpaceXAI. The cluster that Musk had launched in Memphis in 2024 with the stated ambition of training a frontier model that could compete with OpenAI and Anthropic was now an Anthropic facility in everything but name. xAI's training had moved to Colossus 2, the follow-up project, and the rent on Colossus 1 was collected the same day. For the AI capital-expenditure thesis that has hung over hyperscaler earnings calls for two years, Wednesday supplied the structural answer that the bear case now has to confront.

Microsoft, Amazon, Google, and Meta are collectively on track to spend more than $300 billion on AI infrastructure in 2025 alone, with similar commitments queued for 2026. The bear case on that spend has come down to essentially the same question in every quarterly earnings cycle since 2023: what happens if the model bet does not pay off? What if a given hyperscaler's models do not improve fast enough, if the revenue does not arrive at the scale required to underwrite the capex, or if a particular lab is reorganized away from the strategy that justified the build?

What Wednesday demonstrated is that the compute itself does not strand when the model bet behind it changes. xAI is the cleanest case study available: a frontier-model lab founded in 2023 specifically to compete with OpenAI and Anthropic, with billions in capex behind it, has been functionally absorbed into its parent company while its flagship cluster has been re-papered as a lease to one of the rivals it was built to beat. None of that capex was wasted, because the chips, power, water rights, and interconnect at Colossus 1 retain their value as long as somebody at the frontier still needs them, and on Wednesday that somebody was Anthropic.

The reason Colossus 1 found a tenant within hours rather than months is that demand for AI compute is running well ahead of any one company's plan. A lab growing at an annualized eightyfold against a tenfold internal plan is not in a position to be choosy about counterparties. It will take 5 gigawatts from Amazon, a similar commitment from Google and Broadcom, $30 billion from Microsoft, and 300 megawatts from a counterparty whose chief executive spent the last two years describing the lab as a competitor. The lab is also in talks to raise capital at a $900 billion valuation that would make it worth more than OpenAI, and Amodei described the current pace of expansion as "just crazy" and "too hard to handle." Demand of that magnitude will fill whatever capacity gets built, regardless of which operator does the building.

A frontier-model bet is venture in shape — speculative, binary, vulnerable to a single rival running away with the category. An infrastructure bet is not, as long as some lab in the race continues to grow into the available compute. The model is the upside option; the compute is the underlying asset, and the asset has a tenant whether or not the option pays off.

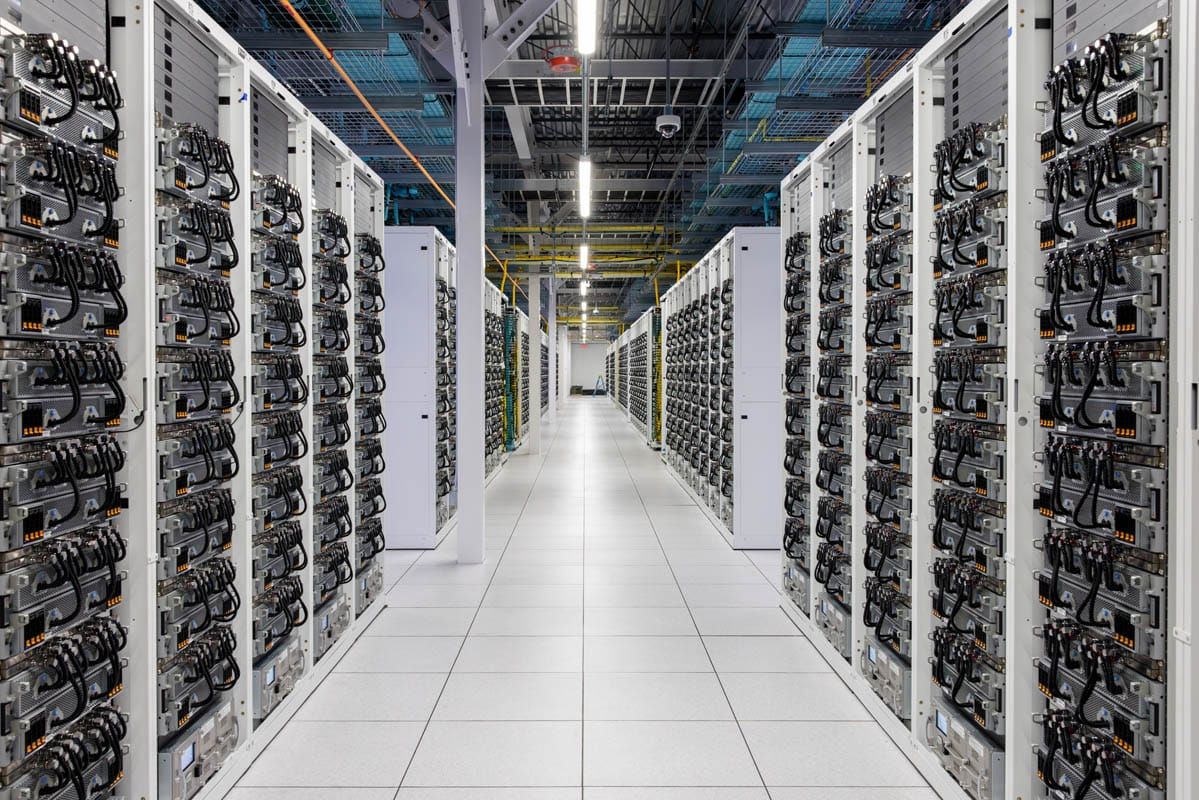

Compute is not perfectly fungible: older GPUs depreciate fast, network architecture matters, and software stacks carry months of lock-in. But at the current frontier, where the chips are recent and the architectures are converging on the same Nvidia stack, fungibility is high enough to do the structural work the capex thesis needs. Colossus 1 houses hundreds of thousands of advanced Nvidia processors of the kind every frontier lab is currently using. The hardware does not have to be perfectly fungible to retain value; it has to be fungible enough to find a tenant before the depreciation curve overtakes the lease.
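The race between re-leasing and depreciation can be put in rough numbers. What follows is a toy sketch, not a valuation model: the function name, the 4% monthly rental yield, and the 30% annual decay in hardware value are all hypothetical figures chosen for illustration, and none of them comes from the Colossus 1 deal. It assumes rent each month is a fixed fraction of the hardware's remaining value, that the value decays geometrically, and that no rent is earned while the cluster sits empty.

```python
def recovered_fraction(vacancy_months, lease_months,
                       monthly_yield=0.04, annual_decay=0.30):
    """Fraction of the original hardware cost recovered as rent.

    All parameters are hypothetical illustration values:
    - vacancy_months: months the cluster sits empty before a tenant signs
    - lease_months:   length of the lease once it starts
    - monthly_yield:  rent per month as a share of remaining hardware value
    - annual_decay:   share of hardware value lost per year
    """
    # Convert the annual decay into the share of value kept each month.
    monthly_keep = (1.0 - annual_decay) ** (1.0 / 12.0)
    total = 0.0
    # Rent accrues only during the lease, on the value left at that point.
    for month in range(vacancy_months, vacancy_months + lease_months):
        total += monthly_yield * monthly_keep ** month
    return total


# Same three-year lease, signed immediately versus after a year of vacancy.
same_day = recovered_fraction(vacancy_months=0, lease_months=36)
year_late = recovered_fraction(vacancy_months=12, lease_months=36)
print(f"tenant found at once:      {same_day:.2f} of hardware cost")
print(f"tenant found after a year: {year_late:.2f} of hardware cost")
```

Under these assumptions, every month of vacancy shifts the whole lease further down the depreciation curve, so the same contract recovers less of the original outlay. That is the sense in which finding a tenant within hours, rather than months, matters to the capex thesis.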

At the operator level, SpaceX is the proof. The company is preparing for what filings have suggested is an imminent blockbuster initial public offering, and the prospectus will not need to argue that SpaceXAI is going to beat Claude. It will need to argue that Colossus 1 has an anchor tenant in talks to raise capital at a $900 billion valuation, that Colossus 2 is online and training, and that the option to buy Cursor for $60 billion and the planned $119 billion Texas chip-fabrication facility called the Terafab are lined up behind them. That is a balance sheet a roadshow can sell, and it can be sold without SpaceX ever winning the model race.

Inflection, founded in 2022 to compete with OpenAI on consumer AI, was effectively absorbed into Microsoft in 2024 when Microsoft hired most of its team and licensed its technology. xAI, founded in 2023 to rival OpenAI and Anthropic, was on Wednesday folded into SpaceX. Anthropic remains the only frontier lab founded after OpenAI that still operates as an independent company, and its compute roadmap now reads as a list of contracts with the same hyperscalers and infrastructure operators its models compete with on usage. The frontier of the industry is increasingly an arrangement in which a small number of capital-rich operators own the hardware, and the labs doing the model work pay them rent.

The bear case on AI capex has been that the spend is venture in scale and binary in outcome, and Wednesday revised the second half. The scale is still venture, but the outcome, increasingly, is not, because even when an operator winds down the model team a cluster was built for, the cluster itself keeps earning. Demand for compute has decoupled from the question of which lab is doing the computing, and Wall Street has been waiting for a demonstration that the AI capex bet is de-risked against single-lab failure. It now has one.