To rival Nvidia, Google surrenders the moat

For a decade, the TPU's power was that it only worked inside Google; that is now becoming unsustainable

AMIN VAHDAT has spent a decade helping build what Google calls a "tech island" — an end-to-end AI compute stack in which TPU silicon, Google data centers, and Google's own orchestration software all depend on one another. At Google Cloud Next in Las Vegas this week, he and his colleagues explained why Google has decided to abandon it.

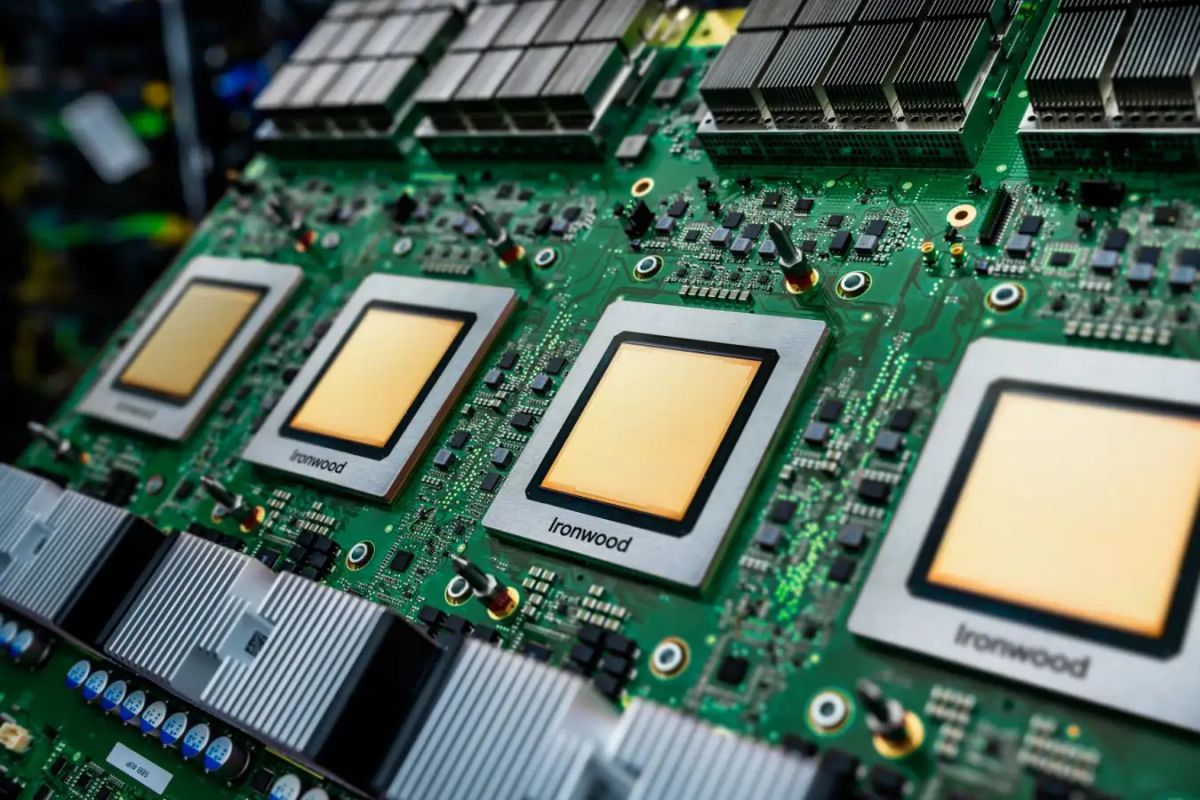

The announcement was ostensibly about two chips: the TPU 8t, tailored for training AI models, and the TPU 8i, built for running them after they have been trained. The split itself is news — Google has previously built general-purpose TPUs and resisted dedicated inference silicon — and the claimed gains (124% and 117% more performance per watt, respectively, over the prior generation) are meant to blunt Nvidia's long-standing lead. Behind the hardware sits a more consequential set of concessions. Google is now letting Anthropic run TPUs in Anthropic's own data centers rather than Google's; it has enabled customers to use PyTorch and third-party schedulers rather than its own orchestration stack; and it has signed multibillion-dollar deals with Meta, Citadel Securities, and (in advanced talks) G42. Alongside an existing October commitment to supply Anthropic with up to one million TPUs and a separate 3.5-gigawatt Broadcom-built TPU pact starting in 2027, they amount to a strategic concession.

Drawbridge down

The trouble is that the island was, for a decade, the whole point. Jeff Dean started building what became the TPU in 2013 precisely because off-the-shelf silicon could not meet Google's own demands; the chip's appeal was that it was optimized for workloads only Google ran. Vertical integration let DeepMind researchers co-design hardware with model architecture, which is how Gemini 3, released in November and trained entirely on TPUs, reached benchmark parity with frontier GPU-trained models. It is also how Google built what SemiAnalysis reckons is the only hyperscaler ASIC program besides AWS Trainium with a serious shot at scale. The integration was the moat.

This article is for Vector members. Start a 7-day free trial to keep reading.

Start your free trial